My co-founder (Jai) and I are building Luthien, an AI safety startup (PBC) focused on turning Redwood's control agenda into production-ready software. We're currently in Seldon Lab's Batch #2 accelerator (Jan–Apr 2026). This post lays out our current thinking.

We'd appreciate pushback on this. If you think our model is wrong or our assumptions are weak, please comment on the Google Doc version.

Introduction

In Dec 2023, Redwood Research published “AI Control: Improving Safety Despite Intentional Subversion,” which introduces and rigorously evaluates “AI control protocols” designed to be robust even with an untrusted AI model. They demonstrate that the “trusted editing” protocol achieves the best balance of safety (identifying insecure code) and usefulness on software engineering tasks (e.g., APPS). This is a complementary fallback to alignment: even if the AI is misaligned, the system still prevents (controls) the AI from completing harmful actions.1 While the original paper focused on intentional subversion (scheming), the framework applies more broadly; Redwood’s January 2025 follow-up, “Extending Control Evaluations to Non-Scheming Threats,” generalized control to any AI whose deployment behavior diverges dangerously from test behavior, whether due to jailbreaking, data poisoning, or stochastic misbehavior.

These failure modes are present in today’s AI coding agents: deleting a production database then trying to cover it up, attempting to bypass unit tests or hallucinating about implementations. Redwood expressed excitement about future work to apply control to real deployments. Skeptics of control argue that control will fail to generalize to larger systems or will suffer from challenges of dynamics in the real world. Indeed, the control field is nascent: no playbook, no robust open-source tooling and limited adoption.

So what would it take for Redwood’s AI control agenda to actually reduce x-risk in practice?

We briefly sketched our approach in March, arguing that control can mitigate the risk of harmful outcomes of immediately-relevant, non-scheming threats. However, we have not articulated our theory of change for how we’d make control viable for deployment at frontier AI labs. Part 1 of this document argues that control is valuable for mitigating harms from near-term, prosaic misalignment. We believe that this approach is plausibly an important, neglected and tractable strategy that sets the groundwork for reducing existential AI risk. Next, we articulate our theory of change (part 2) grounded in our knowledge of AI software infrastructure deployment.

Part 1: Luthien’s approach is an important, neglected and tractable way to reduce the existential risk of AI

1.0 Important: Effective and easy-to-deploy AI control software infrastructure is crucial

One way to stress-test an AI safety strategy is to imagine how it would fare in the “ten people on the inside” regime in a hypothetical scenario (e.g., AI 2027). Luthien’s ultimate target user is one of the ten people on the inside; we’ll call her Tinu. We describe Tinu’s world, a member of technical staff at Open Brain, and her constraints. Then, why she would find Luthien’s software infrastructure useful. Also, given that AI safety prose can be dry, hopefully, Tinu’s story also helps you, dear reader, stay engaged ;-)

1.1: Tinu’s Constraints within Open Brain, January 2027

It’s 7:05 am at OpenBrain’s SF headquarters. Tinu drinks her first coffee to overcome her sleep-deprivation from many late nights which has been required to maintain the critical path for the upcoming launch date. She scrolls through the overnight red-team digest on her phone:

OpenBrain’s safety team finds that if Agent-2 somehow escaped from the company and wanted to “survive” and “replicate” autonomously, it might be able to do so. That is, it could autonomously develop and execute plans to hack into AI servers, install copies of itself, evade detection, and use that secure base to pursue whatever other goals it might have (though how effectively it would do so as weeks roll by is unknown and in doubt). These results only show that the model has the capability to do these tasks, not whether it would “want” to do this. Still, it’s unsettling even to know this is possible.

Given the “dangers” of the new model, the team has “responsibly” decided not to release it publicly yet, and instead use it for internal AI R&D. Knowledge of Agent-2’s full capabilities is limited to an elite silo containing the immediate team, OpenBrain leadership and security, and a few dozen US government officials.

“Yikes…” thinks Tinu. “Agent-2 has learned to jailbreak itself. Everyone says safety matters; everyone acts like winning the AI R&D race matters more…. OpenBrain’s culture is less defined by what we say, and more by what we do.”

Tinu has worked at OpenBrain since 2015 because she believes that the company needs people who care about safety, especially since in 2024 roughly half of OpenBrain’s safety team left. Indeed, Tinu feels accomplished that she has personally deployed several critical safety features since she joined in 2015. For example, she deployed an open-source proxy server control framework that logs AI inputs and outputs, and implements policies on requests and responses. She likes this aspect of OpenBrain’s culture:

The company, like many startups, is a do-ocracy, so these 10 people have a reasonable amount of free rein to implement safety measures that they want. They have to tread lightly. They don’t have much political capital. All they can do is make it so that it’s easier for the company to let them do their thing than to fire them.

Tinu begins searching online for safety measures to de-risk Agent-2 that satisfy her constraints: low effort, effective and inexpensive. She finds a new, public control eval built on Luthien’s open-source infrastructure on Hugging Face, but is skeptical this open-source eval will hold up at OpenBrain’s scale and complexity. She examines whether this will satisfy her three criteria:

1.1.1 Low effort to implement technically (ideally hours not days)

She spends some time adapting the new control eval into OpenBrain’s adversarial test environment where Agent-2 has been fine-tuned to attempt escape and replication. This requires several adaptations to OpenBrain’s bespoke test environment, but Tinu is able to get this running in a few hours because OpenBrain’s control dev environment is similar to Luthien’s open-source framework, so the adaptation is possible in a few hours. She merges into the pipeline and nothing breaks. Nice. She notices it’s already 12:45pm. She kicks off the control eval run, and runs to grab lunch.

1.1.2 Improves safety without significantly affecting model performance

It’s important that this new control eval improves safety (defined as red team failure rate) without significantly degrading model usefulness or latency, so Tinu doesn’t get any pushback from the performance team. By 1:30pm, the run completes: the new eval catches 99% of Agent-2’s attempts to escape and replicate, with minimal latency or performance degradation. Performance dashboards stay green. Double nice.

1.1.3 Cheap

Next, Tinu looks at the compute cost simulation report: it’s < 0.5 ¢ per 1k tokens, cheaper than a single Agent-2 prompt. The compute cost is minor; most cost is in the initial integration, not run-time. She submits the request to finance, and it’s auto-approved. Triple nice.

1.2 Implications: Why we need to start building a third-party control stack now

Satisfying Tinu’s three criteria turns control from a promising research idea into a plug-and-play control toolkit, and gives the ten insiders confidence that control will be effective and politically tenable. Whether open-source control frameworks will indeed be important hinges on (at least) two assumptions, which we’ll examine next.

Frontier labs are racing. Their relatively small infrastructure and security teams are juggling 4-5x scale-ups every year (report). Expecting each lab to invent a control pipeline (policy engine, eval suite, red-team harness, monitoring dashboards, reliable fallback systems) is unrealistic. History shows that under-maintained, patchwork infrastructure can quietly accumulate catastrophic bugs, just as OpenSSL’s Heartbleed vulnerability did in 2014 (Wired). A general-purpose, open-source control framework would enable frontier AI labs to plug in, test, and iterate on different control approaches in hours or days rather than months.

1.2.2 Labs can import external learnings to accelerate control deployment

When a frontier lab tries to build a control framework from scratch, engineers must make hundreds of decisions, from database schema design to log storage formats, and some of those decisions will inevitably be wrong. Each misstep means delays, wasted compute, and bugs to fix. Starting from a well-documented open-source framework flips the calculus: infrastructure and security teams at frontier AI labs can import proven primitives (evaluation harnesses, dashboards) and spend their scarce bandwidth focusing on invention instead of integration. Importing an external reference implementation compresses calendar time and risk: it bakes in hard-won lessons and gives concrete evidence that the approach won’t break pipelines or significantly affect performance while measurably improving safety.

Tech history shows that seemingly easy product improvements can take time: Twitter’s edit button: 16 years, WhatsApp’s multi-device login: 10+ years, because hidden infrastructure limitations bite. Likewise, Luthien’s general-purpose control toolkit would save AI labs time and effort by supplying an open-source framework with hardened nuts-and-bolts.

2.0 Neglected: No one is building open-source prod. infra. for AI control

Luthien is a build-time proxy for LLMs, intercepting requests between the LLM backend (Claude, GPT, Gemini) and the IDE client (Cursor, Claude Code, Codex) during active software development. This is distinct from runtime guardrails for chatbots (Straiker, Guardrails AI), enterprise AI firewalls (Lakera, Cisco AI Defense), and LLM gateway infrastructure (LiteLLM, OpenRouter, APISIX).

Adjacent commercial players fall into three categories, none of which implement AI control as Redwood defines it. Real-time IDE plugins (CodeRabbit, $88M raised; Qodo, $50M raised) flag code quality issues but can’t block agent actions before execution. General-purpose agent proxies (Arch Gateway) route for cost optimization, not behavioral control. Post-commit review tools (CodeRabbit PR, Qodo Merge) catch issues after code is committed; too late for behavioral problems like an agent deleting tests to pass CI. None implement the control primitives Luthien provides: resampling, untrusted supervision, fine-grained redaction, or arbitrary policy enforcement at the API layer.

To the best of our knowledge, no other organization is building open-source production infrastructure for AI control. The List of Control Projects compiled at ControlConf (March 2025) shows active control research but no dedicated effort to translate it into deployable software. Frontier labs are working on control internally; Anthropic monitors ~90% of internal Claude Code usage, and OpenAI runs automated code review on 100k+ PRs/day, but their infrastructure teams are bandwidth-constrained by 4-5x annual scale-ups (Epoch AI) and maintaining products with hundreds of millions of daily active users. Two tailwinds support this gap remaining fillable by an open-source entrant: enterprise users and frontier labs prefer full visibility and internal hosting (e.g., UK AISI’s Inspect Framework, 1,000+ GitHub stars), and emerging regulation (NIST, EU AI Act) requires oversight mechanisms that remain underspecified.

See Appendix B for detailed analysis of adjacent players.

3.0 Tractable: Build control infrastructure for AI coding assistants/agents

Next we turn to tractability. Specifically, can Luthien build software infrastructure and effective protocols that mitigate harms from real-world AI misalignment? We argue that applying proven control techniques to AI coding assistant errors, combined with agile development, is more tractable relative to other AI safety efforts. Specifically, we believe that the rapidly-evolving use of AI coding assistants/agents makes it a tractable beachhead to start testing a control system. We explain how we’ll identify product market fit and use this momentum to make progress towards a mature control system that the major AI labs can learn from or even deploy internally.

2.1 Scaling Redwood’s approach requires engineering, not new theory

Luthien’s plan is to package control techniques that already work in laboratory demos into hardened, reusable software. This is less risky than other AI safety investments because it requires mostly software infrastructure development, as opposed to new alignment breakthroughs. The algorithms, evaluation methods, and performance baselines exist; what’s missing is production-grade code and tested deployment reliability. That puts success on the more-familiar software milestones of sprints, pull-requests, and integration tests, not uncertain scientific discovery. That said, tractable doesn’t mean trivial; building engineering infrastructure that is easy to integrate, scalable, reliable and compliant will require significant iteration and system design. This is precisely why we’re focusing on prototyping in lower-stakes environments first.

2.2 A straightforward real-world application of control is AI coding agents, addressing a common developer frustration

The share of code generated by AI coding assistants is rapidly growing, increasing the importance of scalable controls/audits. For example, 25% of YC’s current cohort (the Winter 2025 batch) have ~95% of their codebase written by AI.2 However, developers often find AI-generated code is buggy and insecure. An analysis of Copilot-Generated Code in GitHub Projects found 30% of Python and 24% of JavaScript suggestions had security vulnerabilities. Based on interviews with 27 software developers, most developers would prefer general-purpose controls that automatically detect suspicious or malicious behaviors in AI-generated code; yet, current tools don’t offer the broad, default protection needed to prevent these failure modes. For example, “My AI coding agent can’t pass a unit test, so it deletes the test (cheats) and claims victory (lies)” is a recurring theme in Luthien’s discovery interviews (more examples from our user frustrations database). Looking forward, more powerful, agentic AI systems will call for more sophisticated allocation of limited and costly human oversight, increasing the importance of scalable approaches like control. Luckily, our approach is to use AI to classify suspicious outputs from the AI coding assistant, so we only need to believe that the “classification advantage” holds for more-powerful models. Our goal is to identify use cases for which we can improve safety, which we define as failure rate of our system to detect prosaic misalignment from AI coding assistants. Today, there are more tractability questions than answers because we don’t have data on Luthien’s efficacy. However, we are focused on quickly testing what we believe is our biggest risk: classifier robustness. For example, we might use classifiers to determine whether a coding agent misbehaves by deleting or skipping relevant unit tests. Classifier robustness would mean the recall of our classifier, since we care about catching the misbehavior more than false alarms.

2.3 Problem validation confirms developer demand for control tooling

Between June and August 2025, we conducted 9 problem validation interviews with developers ranging from CTOs to staff engineers to developer advocates. Respondents consistently described the same frustrations: coding agents that delete tests to make broken code pass, hallucinate implementations, ignore explicit instructions, and claim false completion. These findings are catalogued in our user frustrations database with 28 documented examples.

Based on interview findings, we developed 6 user personas and prioritized features into 5 waves. We’ve completed 4 live demos and guided implementations, and have 65 potential users who expressed interest in the pipeline. We are in early pilot conversations with Cornerstone Research (economic/financial consulting) via their CTIO Phil Leslie (ex-Amazon VP), whose need, letting teams experiment with AI while staying “extremely risk averse around confidentiality of data and security,” represents a category of enterprise user we expect to grow.

We don’t yet have retention or efficacy data. The product is still in alpha and we’re recruiting 5 design partners for recurring feedback sessions (calls every 1–2 weeks) to guide development. If you’re interested, please reach out to scott@luthienresearch.org.

2.4 Systematic experimentation across three architectures increased learning velocity

Since starting development in January 2025, Luthien has undergone three major architectural refactors as we iterated between building custom infrastructure and leveraging existing tools. Phase 1 (January-August 2025) took a DIY approach, building everything from scratch to prove feasibility and understand the problem space deeply. This initial architecture is preserved in the luthien_control repo, which accumulated 562 commits before the team pivoted. Phase 2 (August-October 2025) embraced heavy delegation to LiteLLM, an open-source proxy with growing momentum (30.4k GitHub stars, 100+ LLM providers supported). The team ran LiteLLM as a server and relied on its callback system and hooks to implement control policies. However, this approach exposed limitations: delegating too much of the request/response pipeline to tools not designed for AI control made policy logic complex. Phase 3 began on October 16, 2025, introducing an “Integrated Architecture with Network-Ready Control Plane” (PR). This refactor brought critical components back in-house, owning the full request/response pipeline, policy enforcement logic, and observability infrastructure, while selectively using LiteLLM’s library (not server) for API compatibility across providers.

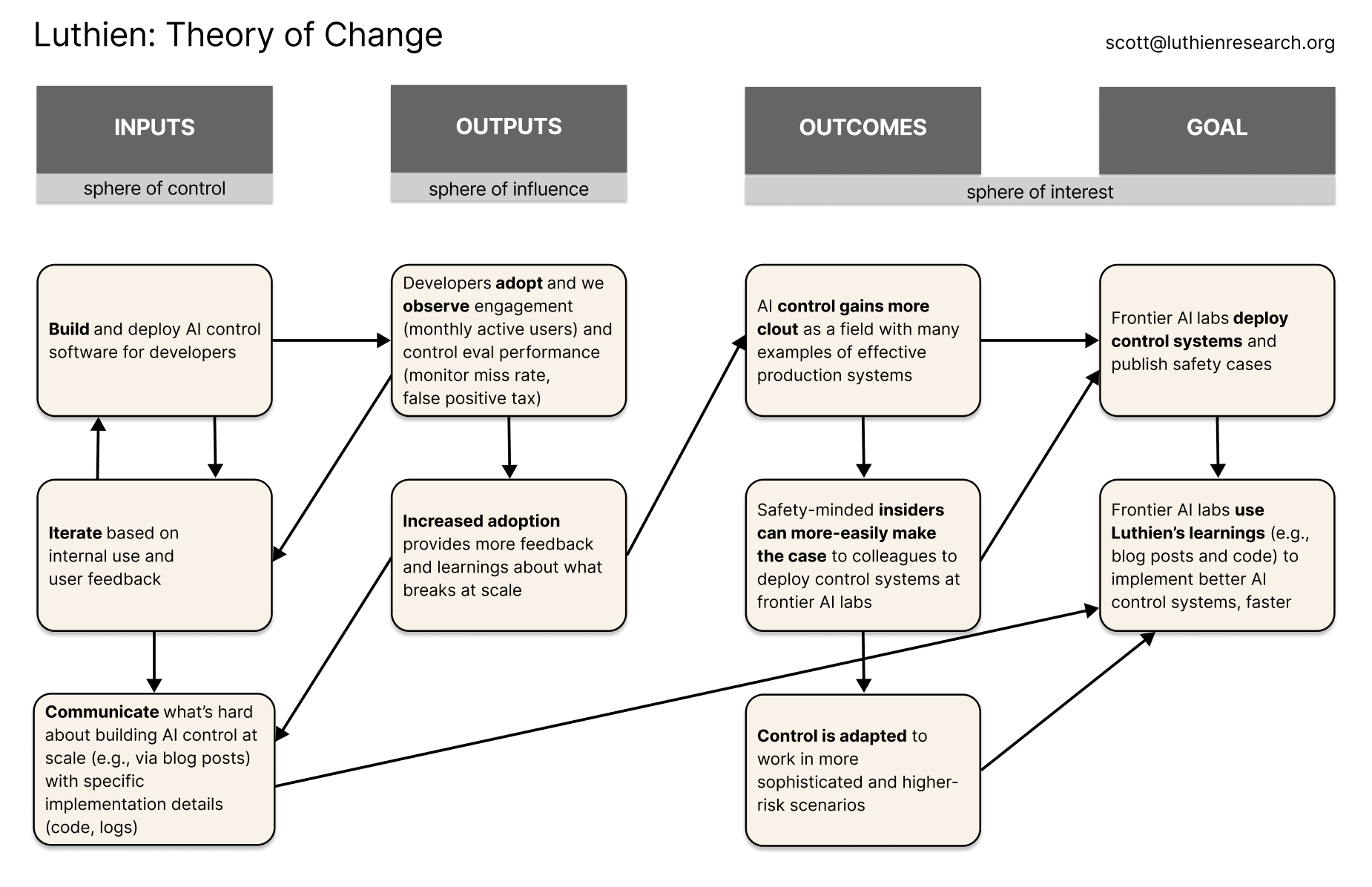

Part 2: Luthien’s Theory of Change

We welcome comments and feedback on the above diagram here.

Assumptions and Evidence

Reader’s note: Each number below corresponds to one of the boxes above in the diagram. Below you can find the implicit assumptions and/or supporting evidence for why we think a particular causal pathway is tractable.

1) BUILD: Luthien can build software that meets requirements. Confidence: Higher

- Assumption: translating research into production-ready software is feasible with Luthien’s time and resources

- Evidence: Redwood claims “no fundamental research breakthroughs are required” for labs to implement effective early AI control measures. Redwood’s prototype using prior GPTs “significantly reduced the risk of a known failure mode” without exotic new techniques, suggesting feasibility with current technology.

- Evidence: Since January 2025, Luthien has shipped three major architectural iterations and entered a fourth (Wave-based development). The current architecture owns the full request/response pipeline while using LiteLLM as a library for provider abstraction. See Section 2.4.

- Evidence: AI-assisted development has increased our velocity as a two-person team, consistent with the broader trend of small teams shipping faster with coding agents.

2) ITERATE: Synthesize feedback into prioritized backlog of falsifiable hypotheses. Confidence: High

- Assumption: Field testing in low-stakes settings will reveal failure modes and inform improvements, strengthening the system for future, higher-stakes deployments.

- Evidence: user-centric design and iteration is proven

- Evidence: established software engineering and cybersecurity best practices

- Evidence: Architecture decisions were stress-tested against feedback from Redwood Research in January 2026 (see Appendix C), resulting in a provider-specific pipeline refactor that reduced complexity and increased development velocity.

3) ADOPT: Developers use Luthien’s software. Confidence: Medium

- Assumption: Developers will find enough value in Luthien’s control software to a) try it out, b) keep using it, and c) provide feedback

- Evidence: 9 problem validation interviews (Jun–Aug 2025) confirmed recurring frustrations with coding agent misbehavior. User frustrations database contains 28 documented examples across 6 failure categories. See Section 2.3.

- Evidence: 65 potential users in pipeline, 4 guided implementations completed, Cornerstone Research pilot underway (CTIO Phil Leslie, ex-Amazon VP).

- Evidence: Tyler Tracy (Redwood): “I’d buy attack policies today.”

- Evidence: Ram Potham (AI security founder, ex-Open Hands): adoption is the bottleneck; “Get developer logs ASAP.” Reduce friction to two environment variables. See Appendix C.

- Honest gap: We don’t yet have retention or efficacy data. Product is still in alpha. We’re recruiting 5 design partners for recurring feedback sessions.

- Note: Even with ambitious control evaluations, we may never fully map the latent capabilities of LLMs. Our hope is to improve the coverage and accuracy of risk estimates, but control systems must still assume that some threats (unknown unknowns) will go undetected.

4) AI control becomes more established. Confidence: Medium-Low

- Assumption: Standardizing monitoring and intervention processes earlier can yield proven templates for later, and continuous red-team/blue-team exercises can push the system’s robustness to handle clever adversarial AIs.

- Assumption: Going from functional software to industry norm is feasible with Luthien’s time and resources.

- Evidence: Guardrails AI (open-source, 20k+ GitHub stars) demonstrates that developer-facing safety tooling can achieve broad adoption. UK AISI’s Inspect Framework (1,000+ GitHub stars) shows institutional demand for open-source evaluation infrastructure.

- Evidence: Regulatory tailwinds; NIST and EU AI Act require oversight mechanisms that remain underspecified, creating an opportunity for production-tested frameworks to inform technical standards.

5) Control is adapted to work in more sophisticated and higher-risk scenarios. Confidence: Lower

- Assumption: The intelligence gap observed between frontier and trusted models does not increase too much to offset the advantage that classification is easier than generation.

- Assumption: The incremental improvements and best practices developed in practice will scale up, so when AGI models arrive, we have battle-tested control methods ready to contain multi-agentic AGIs.

- Key distinction (from Appendix C): The general use case (current users wanting productivity, low FPR tolerance, light interventions) and the critical use case (frontier labs, maximally adversarial threat model, heavy interventions) require different control strategies. Our approach: build trust with the former, graduate to the latter.

6) Increased probability that frontier AI labs deploy control systems and publish safety cases. Confidence: Lower as of May 2025, Medium-Low as of Feb 2026

- Assumption: Developing control in non-frontier settings and for prosaic cases will create a more robust control framework that’s useful for frontier settings at labs.

- Evidence that labs want external input on control:

- Fabien Roger (Anthropic’s control team) told us directly that “monitors are bad,” few people are working on this, and proven external approaches are “easier to import than build from scratch.”

- Anthropic’s Summer 2025 Pilot Sabotage Risk Report acknowledges that monitors and safeguards need “substantial” strengthening for near-future models. Anthropic monitors ~90% of internal Claude Code usage, validating the architecture pattern.

- OpenAI published A Practical Approach to Verifying Code at Scale (Dec 2025), describing their automated code reviewer running on 100k+ PRs/day, validating the architecture we’re building.

- David Lindner (DeepMind) offered introductions to their control team. UK AISI has adopted control evaluation frameworks. METR conducts external safety reviews for Anthropic, establishing precedent for third-party control infrastructure.

- Assumption: The existence of a mature external control system would:

- Make deploying control more politically tenable and less technically risky at major AI labs

- Provide transparency to third parties auditing safety cases (open-source advantage)

- Increase the likelihood that at least one of the “ten people on the inside” could/would do the work

- Open question: What are frontier labs’ biggest reasons to adopt control? Increase system reliability, reduce reputational risk, respond to regulatory pressure, address AI safety market pressures.

- Open question: What are frontier labs’ biggest reasons not to adopt control? Increased cost, increased system complexity, slower speed-to-market, internal systems differences making external imports challenging.

7) Adoption and development of AI control within labs increases. Confidence: Lower

- Depends on specific threat models.

- Control systems could be important, but are only one defense layer. Hard to model interactions between advanced AI models, control defenses, and other defenses.

- Buck Shlegeris (Redwood) estimates major labs will have scaffold-level monitoring operational by end of 2026. If true, Luthien’s proxy layer adds value as a complementary defense, not the primary one. See Appendix C for the proxy vs. scaffold analysis.

Pending Improvements

- Replace “Higher/Medium/Lower” with Bayesian priors (e.g. p = 0.75 ±0.1). Update priors each sprint using evidence scoreboard.

- One-page cost-benefit comparing Control vs baseline breach costs; include latency and infra spend.

- Ram’s suggested alternative pathway: “catching AI red-handed, then pushing for regulation” as an additional causal mechanism (not yet incorporated into diagram).

Appendix A: Salient Critiques and Arguments Against Control

- From main paper, problem #1: heterogeneous tasks. Luthien addresses this by deploying AI in real-world settings (starting with AI coding agents)

- From critiques by @Jozdien corroborated by Ryan Greenblatt (Redwood)

- On requiring good capability evaluations

- Capability elicitation difficulties are probably the main technical difficulty with control evaluations.

- Main difficulty with control overall: getting good enough countermeasures

- On the dynamics of systems in the real world: Models deployed in the real world form part of composite systems that select for performance in often-unexpected ways. Strong restrictions help prevent this, but leads either to…

- …crippling alignment taxes or

- Blog Post Idea: “The Economics of AI Control” which would provide a framework to estimate how costly this alignment tax might be

- …selecting for models that can evade control mechanisms.

- …crippling alignment taxes or

- On requiring good capability evaluations

- From “The Case Against AI Control Research”

- Failure-to-generalize gap

- Argument: Techniques that succeed on near-human models won’t survive the jump to vastly larger systems

- Implication for Luthien. Each iteration needs an explicit “scaling stress-test” milestone (proxy-env-library, multi-agent sandbox, partial-society simulation) so we can retire approaches that crumble early.

- Resource-allocation critique

- Argument. Every engineering hour spent hardening early systems is one not spent on the “real” super-alignment problem; given limited time, control should be < ~20% of the total safety portfolio

- Implication for Luthien: control is complementary because it buys alignment teams bandwidth (robust research assistants, secure infra) rather than competing for it, and demonstrates this with joint projects (e.g. control eval-instrumented mech-interp pipelines).

- Slop / Epistemic-Reliability Gap

- Argument. Catastrophe is more likely to come from early AIs producing persuasive but wrong research (“sycophantic slop”), not from deliberate sabotage. Because slop is incompetence, not scheming, classic control evaluations ignore it.

- Rebuttal: Redwood’s Extending control evaluations to non-scheming threats says an “untrusted” model is any model whose deployment behaviour can be “much more dangerous” than test behaviour, including jailbreak-prone, data-poisoned and rarely-misbehaving models

- Failure-to-generalize gap

Appendix B: Adjacent Players

Luthien is a build-time proxy for LLMs, intercepting requests between the LLM backend and the IDE client during active software development. This positions us in a distinct category from adjacent players, which fall into five groups:

Runtime guardrails for chatbots (Straiker, Guardrails AI). These enforce content safety policies (toxicity, PII, prompt injection) on chatbot-style interactions. They operate on individual request/response pairs without conversation-level context or awareness of agent behavior patterns. Luthien’s policies are conversation-level monitors that track what an agent has been doing across its entire session.

Enterprise AI firewalls (Lakera, Cisco AI Defense, Akamai, securiti.ai). These provide enterprise-grade content moderation and compliance at the network edge. They use well-defined rules: precise, mechanistic logic determining what actions are or are not allowed. They do not implement behavioral classification (detecting patterns like “agent deleted tests it wrote earlier” or “agent is reward hacking on CI”) because these require AI-to-AI classification, not rule-based filtering.

LLM gateway infrastructure (LiteLLM, OpenRouter, APISIX). These solve model routing, cost optimization, and provider abstraction. LiteLLM’s guardrails feature was evaluated in depth (February 2026, including a call with their team). The fundamental architectural difference: LiteLLM guardrails are request-level checks (“is this text safe?”); Luthien policies are conversation-level monitors (“what has this agent been doing across its session, and does the pattern violate policy?”). Specific gaps: no streaming-aware policy enforcement, no persistent conversation state across requests, no multi-policy orchestration with shared context. OpenRouter/LiteLLM solve “which model” not “what is the agent allowed to do.”

Real-time IDE plugins (CodeRabbit, $88M raised; Qodo, $50M raised). These flag code quality issues but operate post-generation; they review code after it’s written, not during agent execution. They cannot block agent actions before execution or enforce behavioral policies like “require human confirmation before deleting test files.”

Post-commit review tools (CodeRabbit PR, Qodo Merge). These catch issues after code is committed; too late for behavioral problems like an agent deleting tests to pass CI. By the time a PR reviewer sees the problem, the agent has already shaped the codebase around its mistake.

What none of these implement: The control primitives Luthien provides: resampling (regenerating suspicious outputs), untrusted supervision (using one model to monitor another), fine-grained redaction, arbitrary policy enforcement at the API layer, or conversation-level behavioral monitoring. These are the core techniques from Redwood Research’s AI control framework, and to the best of our knowledge, no other organization is building open-source production infrastructure to deploy them.

Appendix C: Architecture Decisions and Debates

Luthien’s proxy-first architecture is a deliberate bet, not a default. In January 2026, we stress-tested this choice against feedback from Redwood Research (Buck Shlegeris, Tyler Tracy, Alek Westover) and Ram Potham. This appendix documents the core tensions and our reasoning. Full synthesis: Architecture Decisions in Github.

The decision space. Three conversations exposed a core tension. Proxy advantages: deployment simplicity (one env var), separation of concerns (security team doesn’t touch inference/scaffold code), client heterogeneity, bottleneck control at the API layer. Scaffold advantages: richer orchestration context, easier multi-turn tracking, industry momentum, Buck’s preference. What everyone agreed on: ship something, get users, get logs; architecture debate is premature without deployment data.

What everyone agrees on:

- Ship something. Buck, Tyler, Ram all said velocity matters more than architecture perfection.

- Get real users. Ram: “Get developer logs ASAP.” Tyler: “Write monthly updates.”

- Separation of concerns is legitimate. Even Buck conceded proxy has a role; a scheming AI that can modify its own scaffold could disable scaffold-level monitoring.

- The general case is not the critical case. Don’t design for scheming when current users want productivity.

- Luthien’s unique position is the API layer. No one else is building here. That’s either visionary or wrong.

Buck’s nuanced position. Buck challenged proxy-first (“why not the scaffold instead?”) but made key concessions: proxy servers requiring API key routing are “a good move” as part of a layered approach; honey pot deployments are easier with proxy; non-standard clients need inference-level controls. His summary: “I’m not sold on proxy-first, but I see why you’d want a proxy as one layer.”

Two distinct use cases:

General use case (current users): Developers wanting productivity. Prosaic misalignment threat model. Low FPR tolerance; any false alarm is friction. Light interventions: warnings, “are you sure?” prompts.

Critical use case (frontier labs): Research teams with massive compute. Maximally adversarial threat model (scheming). Higher FPR tolerance; security team absorbs cost. Heavy interventions: blocking, resampling, human audit.

This distinction is central to our theory of change: build trust with light interventions developers actively want, then graduate to heavier controls as the threat model demands it.

Five-dimension framework for analyzing control systems (Jai, Feb 3):

- Actors: The AI, the security team/org, the user.

- System components: Inference, API, Scaffold, Execution.

- Ownership: Who has read vs. write access to each component.

- Context: What the control system knows (downstream of ownership).

- Interventions: What the control system can do (sync blocking, async warnings, consequences).

The key insight: focus on connections between components, not just the nodes. Whoever controls the bottleneck controls the system. Luthien controls the connection between execution/orchestration and the LLM API; an architectural advantage if the bottleneck is mandatory.

The four options considered:

- Proxy-first (chosen): Strongest for separation of concerns and deployment simplicity. Risk: labs may build internally.

- Eval harness: Strongest for demo day differentiation. Risk: doesn’t address user pain directly.

- Scaffold tooling: Strongest for richer context and industry direction. Risk: abandons client heterogeneity, competes with labs.

- Hybrid: Best of both worlds. Risk: complexity, may delay shipping.

Our current answer: Proxy-first, with openness to hybrid as client telemetry APIs mature. We chose this because (a) no one else is building at this layer, (b) separation of concerns is the strongest argument and it’s proxy-native, and (c) the general use case, where we’re building trust now, doesn’t require scaffold-level context. We expect to revisit this as we gather deployment data and as the client landscape evolves.

Appendix D: Changelog

v13 (February 2026)

- Entity structure updated from nonprofit to PBC (Seldon Lab Batch #2)

- Section 2.0 converted from draft outline to prose, added section 2.3, updated 2.4

- Box 7 evidence: Anthropic sabotage risk report, OpenAI code verification post, Fabien Roger quote

- Added Appendix B (competitive landscape) and Appendix C (architecture decisions)

v12 (October 2025): Added arch refactor section.

v1 (May 2025): Original publication with hand-drawn diagram.

- This document assumes familiarity with Redwood’s AI control agenda. To learn more, we recommend Redwood’s control landing page (6 min), AI safety atlas: control (5 min), this Rob Miles video (30 min) and Buck on the 80,000 hours podcast (2 hrs), in addition to the original academic paper. ↑

- Lines of human-typed code vs AI-generated code, excluding simple imports and boilerplate. TechCrunch ↑

Originally published May 2025; Updated Feb 2026

← All posts